Konstantin Semenenko

March 6, 2026

Repeated CPU-heavy work turns runtime choice into an energy and carbon decision. Here is why C# is usually a lower-energy managed-language option than Python and Node.js, where that claim holds, and where it breaks.

Teams rarely notice they chose a dirtier runtime on launch day. The signal arrives later, when the batch window slips, the queue drains slower than expected, or the fix for rising CPU is one more replica. On a hot service, that extra replica is not just a cost line. It is more electricity spent to produce the same useful result, which means the runtime has become part of the system's carbon footprint whether anyone says so or not.

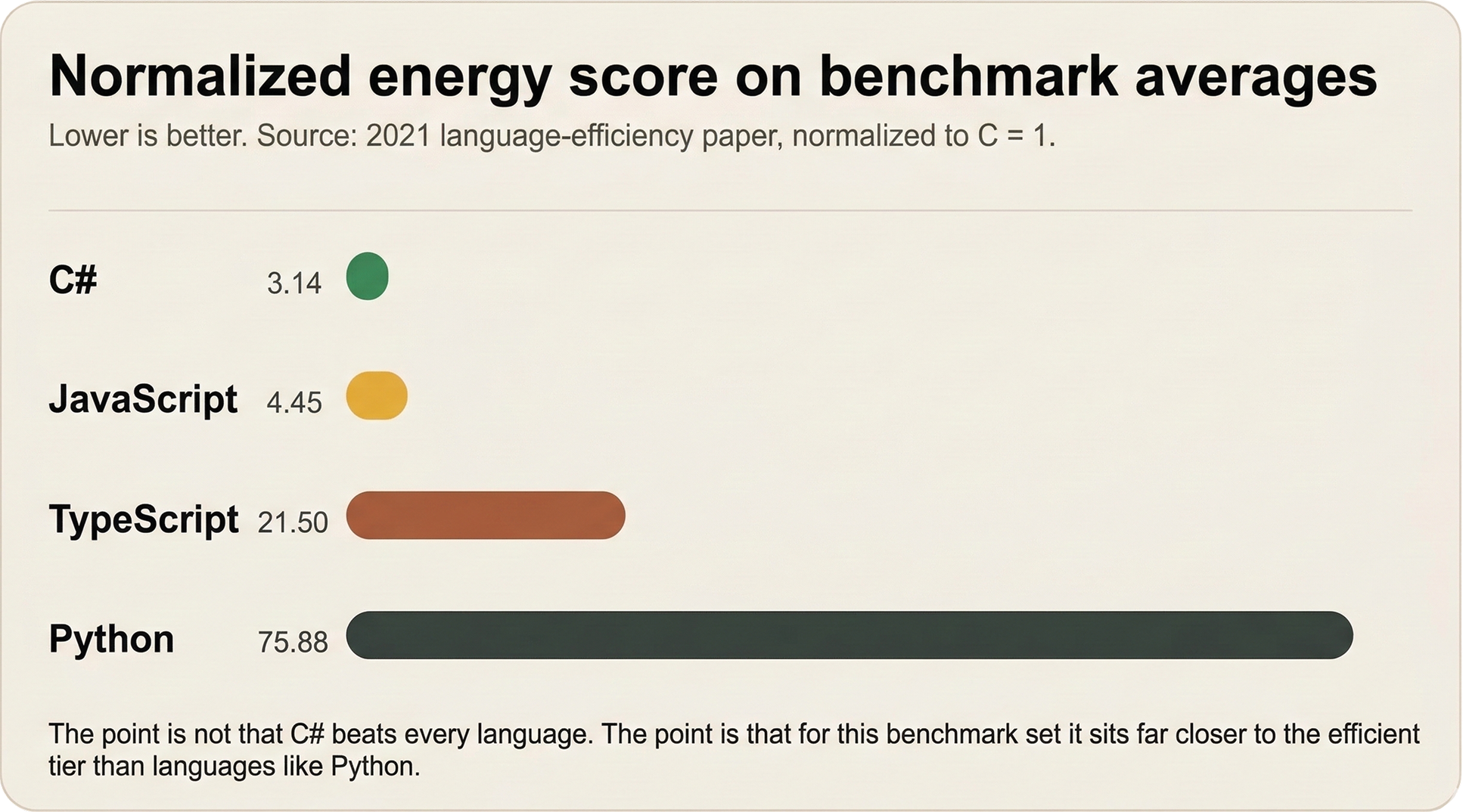

That is why C# vs. Python energy consumption matters beyond language taste, and why C# vs. Node.js energy consumption becomes a real operating question once the same CPU-heavy path runs all day on comparable hardware. Many teams first compare C# vs. Python or C# vs. Node.js performance and only later realize that performance is the entry point to an energy problem. A 2021 benchmark study covering 27 languages placed C# ahead of JavaScript and far ahead of TypeScript and Python on normalized energy use, while a 2022 USENIX runtime study showed V8/Node.js and Python carrying heavy overhead on compute-bound workloads. C# does not lead the entire field, and it should not be sold as a universal answer. It is still one of the strongest mainstream managed-runtime choices when repeated hot paths are expensive enough for wasted CPU time to become wasted electricity.

This article covers one narrow but common case: the same CPU-heavy work repeated on comparable hardware. In that case, C# is usually the greener managed-runtime choice because it completes the functional unit with less CPU time, less energy, and less pressure to add hardware than Python or Node.js.

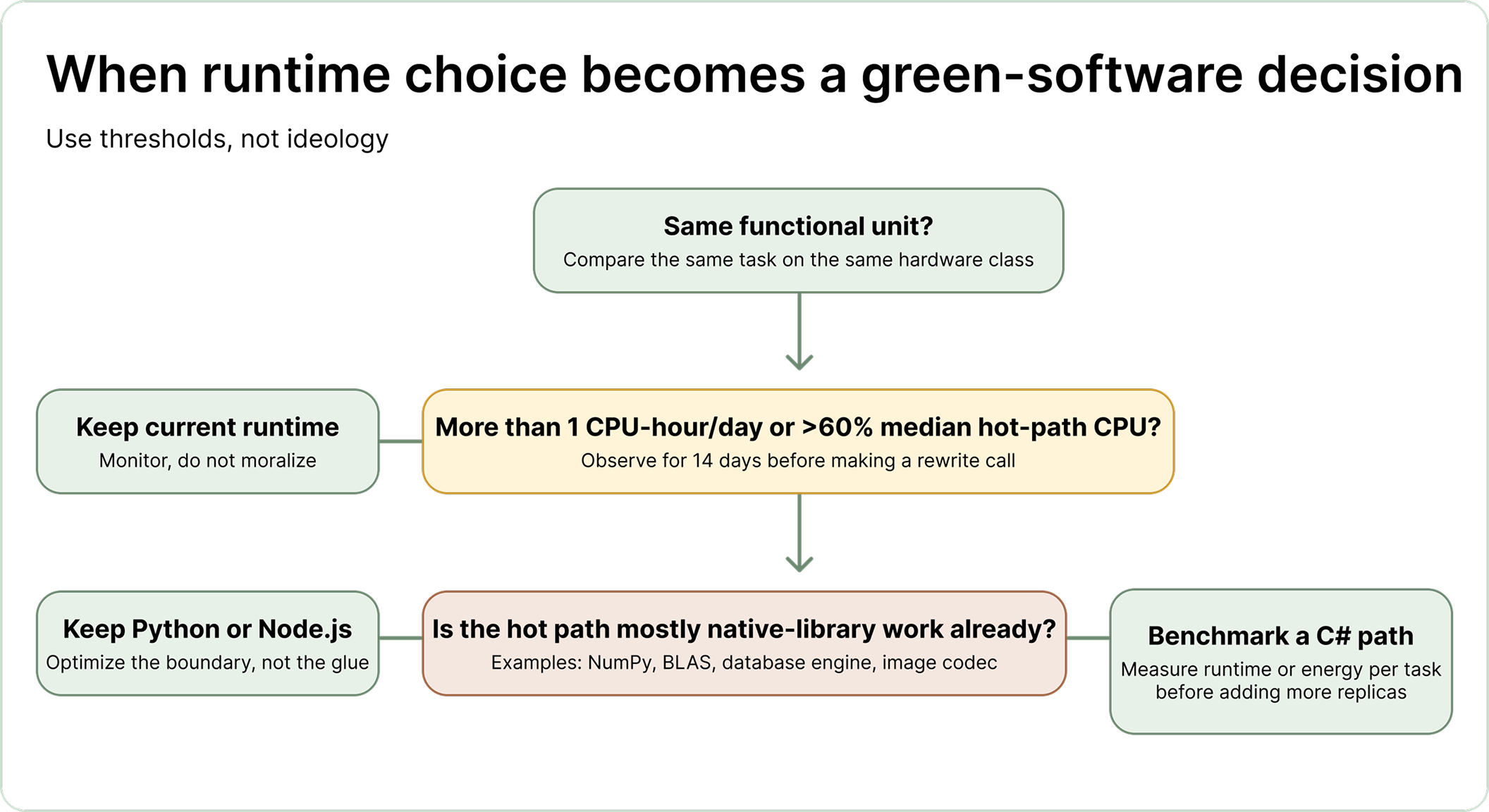

Keep Python when it mostly orchestrates fast native libraries. Keep Node.js when the service is mostly waiting on sockets, files, queues, or databases. Start a rewrite benchmark when the hot path burns more than one CPU-hour a day or median hot-path CPU stays above sixty percent over a two-week window. That threshold matters more than ideology because it turns a language argument into an operating decision with a metric, an owner, and a deadline.

Choose C# when the runtime itself owns the expensive loop. Keep Python or Node.js when they are mostly coordinating work done elsewhere or when the service spends most of its life waiting rather than computing.

That usually means C# for background processors, parsers, scheduling engines, data transformation services, pricing pipelines, recommendation jobs, and API endpoints that stay hot for most of the day. Python remains a reasonable choice when it is glue around optimized native libraries, database engines, codecs, or numerical kernels. Node.js remains a reasonable choice when the dominant work is network concurrency and the CPU-heavy slice is either absent or isolated away from the event loop. The decisive question is simple: where does the expensive work actually happen?

The laptop objection misses how software repeats. A helper script on one notebook is trivial. The same logic copied into test loops, CI jobs, desktop agents, scheduled tasks, and production workers becomes a fleet pattern. Once the waste repeats across days, users, and machines, the small local inefficiency stops being local and starts behaving like infrastructure.

The carbon mechanism is simple, which is exactly why teams underestimate it. The Software Carbon Intensity specification treats operational emissions as electricity use multiplied by the carbon intensity of that electricity. If a service needs more CPU time, more reserved hardware, or more replicas to deliver the same functional unit, emissions rise because the machine has to draw more electricity to finish the same job.

Green-software discussions get cleaner once they focus on energy per unit of useful work. If one service can answer a request, process a batch, or score a document with less electricity than another service on comparable hardware, it starts with a structural emissions advantage before anyone talks about carbon-aware scheduling or a cleaner grid. Those later improvements still matter. They operate on top of the first arithmetic decision, which is how much electricity the software needs to produce the answer at all.
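The arithmetic in this paragraph and the one before it fits in a few lines. The sketch below covers only the operational term the article describes: electricity used (E) multiplied by grid carbon intensity (I), divided by the number of functional units (R). The service names, kWh figures, and grid intensities are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass


@dataclass
class ServiceRun:
    """One observation window for a service (all figures hypothetical)."""
    name: str
    energy_kwh: float      # E: electricity drawn during the window
    functional_units: int  # R: requests answered, batches processed, documents scored


def operational_gco2e_per_unit(run: ServiceRun, intensity_g_per_kwh: float) -> float:
    """Operational emissions per functional unit: (E * I) / R, in gCO2e."""
    if run.functional_units <= 0:
        raise ValueError("functional_units must be positive")
    return run.energy_kwh * intensity_g_per_kwh / run.functional_units


if __name__ == "__main__":
    # Hypothetical: two services scoring the same one million documents.
    lean = ServiceRun("lean", energy_kwh=12.0, functional_units=1_000_000)
    heavy = ServiceRun("heavy", energy_kwh=36.0, functional_units=1_000_000)
    for intensity in (400.0, 100.0):  # today's grid vs. a cleaner one
        a = operational_gco2e_per_unit(lean, intensity)
        b = operational_gco2e_per_unit(heavy, intensity)
        print(f"I={intensity:.0f} g/kWh: lean={a:.4f} g, heavy={b:.4f} g, ratio={b / a:.1f}x")
```

A cleaner grid lowers both numbers, but the threefold gap survives because I multiplies both services equally. That is the structural advantage the paragraph describes: carbon-aware scheduling and cleaner electricity act on top of it rather than erasing it.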

That logic applies from laptops to clusters. The scale changes, while the mechanism does not. A low-duty-cycle internal tool may never justify a runtime rewrite. An always-on service that keeps CPUs hot all day is already telling you that runtime choice belongs in the environmental discussion as much as it belongs in the cost and latency discussion.

The benchmark literature is strong enough to support a narrow conclusion. C# sits in a much better efficiency tier than Python, and it usually lands ahead of JavaScript or Node.js for compute-heavy work, even though it does not rival the top native languages.

The 2021 paper gives the cleanest high-level ranking for this article because it measures energy, time, and memory across a large language set on one hardware stack using RAPL-based energy measurements. Its global normalized averages put C# at 3.14 for energy and 3.14 for time. JavaScript comes in at 4.45 for energy and 6.52 for time. TypeScript lands at 21.50 for energy and 46.20 for time. Python lands at 75.88 for energy and 71.90 for time. Lower is better: the study normalizes each result to the most efficient language in that category, which therefore scores 1.00.

These are normalized benchmark scores rather than your exact watt-hours. That is the right way to read them. The study shows relative distance under one controlled suite, and the gap is large enough that the ranking still matters after you allow for workload noise and benchmark-specific quirks.

The same paper also groups languages by implementation style and finds a broad tier pattern. Compiled languages averaged 120 joules across the benchmark set, virtual-machine languages averaged 576 joules, and interpreted languages averaged 2365 joules. C# matters inside that picture because it gives teams a managed-language path that stays far closer to the efficient end of the ranking than Python or a TypeScript or JavaScript stack in the same benchmark suite. That is the practical argument most business teams need when they are choosing among productive mainstream runtimes and are not about to jump all the way to C or Rust.
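Dividing by C#'s score turns the normalized averages above into "how many times C#" figures, and the same division works for the tier averages. The sketch below does only that arithmetic on the numbers quoted in this article; the results are ratios within one benchmark suite on one machine, not predicted savings for any particular service.

```python
# Normalized averages quoted above from the 2021 study (lower is better;
# the most efficient language in each category scores 1.00).
NORMALIZED = {
    "C#": {"energy": 3.14, "time": 3.14},
    "JavaScript": {"energy": 4.45, "time": 6.52},
    "TypeScript": {"energy": 21.50, "time": 46.20},
    "Python": {"energy": 75.88, "time": 71.90},
}

# Average energy per implementation style across the benchmark set, in joules.
TIER_JOULES = {"compiled": 120.0, "virtual machine": 576.0, "interpreted": 2365.0}


def multiples_of(baseline: str, metric: str) -> dict[str, float]:
    """Express each language's normalized score as a multiple of the baseline's."""
    base = NORMALIZED[baseline][metric]
    return {lang: scores[metric] / base for lang, scores in NORMALIZED.items()}


def tier_multiples(baseline: str = "compiled") -> dict[str, float]:
    """Express each tier's average energy as a multiple of the baseline tier."""
    base = TIER_JOULES[baseline]
    return {tier: joules / base for tier, joules in TIER_JOULES.items()}


if __name__ == "__main__":
    for metric in ("energy", "time"):
        row = ", ".join(f"{lang} {x:.1f}x" for lang, x in multiples_of("C#", metric).items())
        print(f"{metric} relative to C#: {row}")
    print(", ".join(f"{tier} {x:.1f}x" for tier, x in tier_multiples().items()))
```

On these figures Python's energy score is about 24 times C#'s, TypeScript's about 6.8 times, and JavaScript's about 1.4 times, which matches the shape of the argument: a wide gap to Python and TypeScript, a narrower but real one to JavaScript.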

The paper also keeps us from saying anything lazy. C# does not top the whole table, because C, Rust, and C++ lead it there. Some workloads also weaken the simple story. The authors explicitly note that faster is usually linked to lower energy, then show exceptions where workload shape changes the result. In their regex-redux benchmark, interpreted languages such as TypeScript and JavaScript perform far better than their overall reputation suggests. That matters because it keeps the article tethered to reality. Language choice influences energy use strongly, yet workload shape still decides how far the influence travels.

Python and Node.js lose when the hot path stays inside the runtime. Python pays interpretation overhead on every repeated operation. Node.js pays whenever CPU-heavy work collides with a model optimized for I/O and has to be pushed into workers or separate services.

The runtime paper explains the mechanism in more operational terms. Across the LangBench suite, the authors found V8/Node.js applications running 8.01 times slower on average than their C++ counterparts, while Python ran 29.50 times slower on average. They also found that these two runtimes scale poorly for CPU-heavy parallel work. Extra threads do not rescue a compute-bound workload when the runtime still serializes the expensive part or pays a large coordination penalty to move it elsewhere. At that point, Node.js vs. Python performance is no longer the final question. The sharper question is whether either runtime should own the hot path at all.
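The claim that extra threads do not rescue compute-bound work is easy to observe in CPython, the standard Python interpreter, whose global interpreter lock lets only one thread execute Python bytecode at a time. The sketch below runs the same pure-Python loop sequentially and on a thread pool; on a standard CPython build the threaded run is typically no faster. The optional free-threaded CPython builds available since 3.13 change that picture, and the job sizes here are arbitrary, so treat the timings as illustrative.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def busy_sum(n: int) -> int:
    """CPU-bound work that stays inside the interpreter: a pure-Python loop."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def run_sequential(jobs: list[int]) -> tuple[list[int], float]:
    start = time.perf_counter()
    results = [busy_sum(n) for n in jobs]
    return results, time.perf_counter() - start


def run_threaded(jobs: list[int], workers: int = 4) -> tuple[list[int], float]:
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # On a GIL build these threads take turns running bytecode instead of running in parallel.
        results = list(pool.map(busy_sum, jobs))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    jobs = [1_000_000] * 4
    seq, seq_s = run_sequential(jobs)
    thr, thr_s = run_threaded(jobs)
    assert seq == thr  # same answers either way; only the timing differs
    print(f"sequential {seq_s:.2f}s vs 4 threads {thr_s:.2f}s")
```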

Python pays first for interpretation, because the hottest path stays inside an interpreter that has to decode and execute high-level operations again and again. That overhead becomes brutal once the same loop, comparison, parsing routine, or transformation runs often enough. Node.js pays in a different place. The default mental model around Node is asynchronous throughput, which is excellent when the system is waiting on network or disk I/O. CPU-bound work is a different story: the event loop still has to spend real cycles performing the computation, and those cycles block all other work on that thread while they run.
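Node.js itself is outside this article's example language, but Python's asyncio event loop is also single-threaded and shows the same split, so it serves here as a hedged analogue rather than a Node.js measurement. In the sketch below, `fake_io` stands in for socket or database waits and overlaps freely, while `cpu_burn` runs on the loop thread and stalls a heartbeat task for as long as it computes. All names and sizes are illustrative.

```python
import asyncio
import time


async def fake_io(delay: float) -> float:
    """Stand-in for a network or disk wait: awaiting yields control to the loop."""
    await asyncio.sleep(delay)
    return delay


def cpu_burn(n: int) -> int:
    """Stand-in for CPU-bound work: nothing inside yields to the loop."""
    total = 0
    for i in range(n):
        total += i % 7
    return total


async def heartbeat(interval: float, gaps: list[float], stop: asyncio.Event) -> None:
    """Record the time between ticks; a long gap means the loop was blocked."""
    last = time.perf_counter()
    while not stop.is_set():
        await asyncio.sleep(interval)
        now = time.perf_counter()
        gaps.append(now - last)
        last = now


async def io_bound_demo(tasks: int = 100, delay: float = 0.05) -> float:
    """Wall time for many concurrent waits: close to one delay, not tasks * delay."""
    start = time.perf_counter()
    await asyncio.gather(*(fake_io(delay) for _ in range(tasks)))
    return time.perf_counter() - start


async def cpu_bound_demo(n: int = 2_000_000) -> tuple[float, float]:
    """Return (longest heartbeat gap, CPU burn duration) when work runs on the loop."""
    gaps: list[float] = []
    stop = asyncio.Event()
    beat = asyncio.create_task(heartbeat(0.01, gaps, stop))
    await asyncio.sleep(0.05)  # let the heartbeat tick normally first
    start = time.perf_counter()
    cpu_burn(n)                # blocks the loop: no other task can run meanwhile
    burn = time.perf_counter() - start
    await asyncio.sleep(0.02)  # let the delayed tick land
    stop.set()
    await beat
    return max(gaps), burn


if __name__ == "__main__":
    print(f"100 concurrent 50 ms waits took {asyncio.run(io_bound_demo()):.2f}s")
    gap, burn = asyncio.run(cpu_bound_demo())
    print(f"CPU burn took {burn:.2f}s; longest heartbeat gap {gap:.2f}s")
```

The longest heartbeat gap can never be shorter than the burn itself, because the heartbeat cannot resume until the computation returns control to the loop. Node's answer, per its own documentation, is to move such work off the main thread.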

Node’s own documentation says worker threads are useful for CPU-intensive JavaScript operations and do not help much with I/O-intensive work. That sentence matters because it quietly admits the real boundary. Once the workload is compute-heavy, the official answer is to move the work off the main thread, accept the extra complexity, and manage another layer of coordination. Teams can do that successfully, though they should still ask a sharper question first: why keep paying the coordination tax around a runtime that is a weak fit for the job?

TypeScript deserves careful handling here because TypeScript code compiles to JavaScript and runs wherever JavaScript runs. That means TypeScript is not a separate carbon villain standing beside Node.js. The emitted JavaScript and the runtime that executes it decide the actual behavior. A TypeScript service on Node.js usually inherits the same V8 and worker-thread story as ordinary JavaScript. For this article, the clean framing is simple: treat TypeScript as part of the JavaScript runtime stack, then judge the stack by the work it performs and the electricity it needs.

The hardline version breaks whenever the expensive work is somewhere else. Python around efficient native libraries is a different case. Node.js on I/O-heavy services is a different case. C# also stops short of the absolute efficiency tier occupied by native systems languages.

The strong anti-Python or anti-Node slogan falls apart in three common situations. The first is Python that mostly orchestrates native code. If the expensive matrix math, numerical optimization, codec, or database engine already lives in efficient C, C++, or Fortran under the hood, the runtime penalty of Python shrinks because Python is serving as glue around a faster core. In that case, a rewrite can still make sense, though the case must be measured instead of assumed.
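The glue-versus-hot-loop distinction shows up even inside Python's standard library. The sketch below computes the same Adler-32 checksum (the one defined for the zlib format in RFC 1950) twice: once byte by byte in the interpreter, and once through `zlib.adler32`, where the loop runs in C and Python only passes the buffer along. The payload size is arbitrary and the timing is illustrative; the point is where the expensive loop lives, not the exact ratio.

```python
import os
import time
import zlib

MOD_ADLER = 65521  # largest prime below 2**16, per the Adler-32 definition


def adler32_interpreted(data: bytes) -> int:
    """Hot loop owned by the interpreter: every byte is a Python-level step."""
    a, b = 1, 0
    for byte in data:
        a = (a + byte) % MOD_ADLER
        b = (b + a) % MOD_ADLER
    return (b << 16) | a


def adler32_glue(data: bytes) -> int:
    """Python as glue: the same loop runs inside zlib's C implementation."""
    return zlib.adler32(data)


if __name__ == "__main__":
    payload = os.urandom(2_000_000)
    for fn in (adler32_interpreted, adler32_glue):
        start = time.perf_counter()
        checksum = fn(payload)
        print(f"{fn.__name__}: {checksum:#010x} in {time.perf_counter() - start:.4f}s")
```

A service whose hot path looks like `adler32_glue` is the case where a rewrite must be measured rather than assumed; one that looks like `adler32_interpreted` is the case the rest of this article targets.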

The second exception is I/O-heavy Node.js. A service that spends most of its life waiting on sockets, files, queues, or remote APIs does not suddenly become wasteful because it uses JavaScript. The event loop model can still be a good operational fit. Trouble begins when teams keep the same runtime after the system has clearly crossed into CPU-heavy territory and then normalize the cost by adding more workers, more processes, or more hardware.

The third exception is the claim that C# is the greenest possible answer, because the benchmark literature puts native languages ahead of it. That does not weaken the article’s actual recommendation. Teams choosing among productive managed runtimes still need a runtime that keeps energy use under control. C# clears that bar far more convincingly than Python or Node.js on repeated CPU-heavy workloads, which is why it deserves the green-software argument even without winning the absolute world championship.

These exceptions matter for another reason, because they keep the argument out of culture-war territory. Teams still need a way to discuss runtime waste without turning the conversation into identity politics. Once the expensive path is obvious, clinging to the slower runtime becomes an infrastructure decision with environmental consequences attached.

Rewrite once the workload crosses a repeatable threshold. A good trigger is more than one CPU-hour per day for the same hot path, or sustained median hot-path CPU above sixty percent over a two-week window.

Use thresholds because they lower the emotional temperature of the conversation. A team should move from opinion to measurement once the same workload spends more than one CPU-hour per day on a repeatable path over a fourteen-day window, or when median hot-path CPU stays above sixty percent across the same window. On local or edge devices, use the same logic across a thirty-day period: if the app performs compute-heavy work every session or keeps background computation alive between sessions, the runtime choice deserves measurement because the waste repeats on every machine you ship.
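The fourteen-day trigger can be written down as a check that runs against existing monitoring data. In the sketch below, the `HotPathWindow` type and its field names are hypothetical, and reading "more than one CPU-hour per day" as the average over the last fourteen days is an assumption; a team could equally require every day in the window to exceed it.

```python
from dataclasses import dataclass
from statistics import mean, median

WINDOW_DAYS = 14
CPU_HOURS_PER_DAY = 1.0  # trigger: more than one CPU-hour per day on the hot path
MEDIAN_CPU_SHARE = 0.60  # trigger: median hot-path CPU above sixty percent


@dataclass
class HotPathWindow:
    """Hypothetical monitoring export for one hot path."""
    daily_cpu_seconds: list[float]    # CPU-seconds spent on the hot path, one entry per day
    utilization_samples: list[float]  # hot-path CPU utilization, 0.0 to 1.0, assumed to cover the same days


def should_start_benchmark(window: HotPathWindow) -> bool:
    """True when either trigger fires over a full fourteen-day window."""
    days = window.daily_cpu_seconds[-WINDOW_DAYS:]
    if len(days) < WINDOW_DAYS or not window.utilization_samples:
        return False  # not enough observation yet; keep collecting
    # Assumption: "per day" is read as the average over the window.
    cpu_hours_per_day = mean(days) / 3600
    median_share = median(window.utilization_samples)
    return cpu_hours_per_day > CPU_HOURS_PER_DAY or median_share > MEDIAN_CPU_SHARE
```

For the local or edge-device case in the same paragraph, the same shape applies with a thirty-day window and per-session work as the unit.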

The benchmark note needs named owners. Platform or SRE can own the harness and observation window. The backend lead can own the equivalent C# spike. Operations or sustainability can translate the result into energy per functional unit and the scale step it would avoid. Once those owners review the same note in the same sprint, the language argument stops drifting into taste and turns into a scheduling decision.

Scaling out a poor runtime fit can still be the correct short-term choice when deadlines are brutal. The problem begins when the short-term patch hardens into the operating model. At that point the company is buying electricity, compute reservation, and carbon exposure every day to preserve the original stack, and that trade keeps getting more expensive the longer the service stays hot.

Move quickly and keep the scope narrow. Define one functional unit, benchmark one hot path, and decide whether the measured gain is large enough to change the scaling plan before the current workaround becomes permanent.

Start with one hot path and one benchmark memo. Within two weeks, define the functional unit, capture current CPU time and throughput on the existing service, and implement a minimal C# version that performs the same work on comparable hardware. Ask the backend lead and platform lead to review the same memo before the next scale purchase or capacity plan, because the decision is whether the next engineering week goes into more replicas or into a narrow port of the hot path.
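Capturing current CPU time and throughput can start as a small harness around the existing hot path. The sketch below assumes that path is Python and callable in-process; `parse_record` is a hypothetical stand-in for one functional unit. `time.process_time()` counts CPU time for the whole current process, so run the harness in isolation, and measure the C# spike against the same inputs with its own CPU-time counter.

```python
import statistics
import time
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def measure_hot_path(work: Callable[[T], object], units: Sequence[T], repeats: int = 5) -> dict[str, float]:
    """Median CPU-seconds per functional unit and wall-clock throughput over several repeats."""
    if not units:
        raise ValueError("need at least one functional unit")
    cpu_per_unit, units_per_sec = [], []
    for _ in range(repeats):
        cpu_start, wall_start = time.process_time(), time.perf_counter()
        for unit in units:
            work(unit)
        cpu = time.process_time() - cpu_start
        wall = time.perf_counter() - wall_start
        cpu_per_unit.append(cpu / len(units))
        units_per_sec.append(len(units) / wall if wall > 0 else float("inf"))
    return {
        "cpu_s_per_unit": statistics.median(cpu_per_unit),
        "units_per_s": statistics.median(units_per_sec),
    }


def parse_record(line: str) -> dict[str, str]:
    """Hypothetical functional unit: parse one 'key=value;key=value' record."""
    return dict(pair.split("=", 1) for pair in line.split(";") if pair)


if __name__ == "__main__":
    records = [f"id={i};region=eu;score={i % 100}" for i in range(50_000)]
    print(measure_hot_path(parse_record, records))
```

Reporting the median of several repeats keeps one noisy run from deciding the memo.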

If the C# spike materially cuts CPU time or lets the team avoid the next planned scale step, fund the rewrite and keep the port narrow. If it does not, document the result and move on, because the hot path is somewhere else. That outcome still saves time. It prevents a rewrite driven by slogans and returns the team to the real bottleneck.
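The funding rule in this paragraph can be made explicit so the memo records why a decision went one way. In the sketch below, the 30 percent "material cut", four cores per replica, sixty percent target utilization, and average-load sizing that ignores peaks are all hypothetical parameters a team would replace with its own.

```python
import math
from dataclasses import dataclass


@dataclass
class SpikeResult:
    baseline_cpu_s_per_unit: float   # existing service, measured on the hot path
    candidate_cpu_s_per_unit: float  # C# spike: same functional unit, comparable hardware


def replicas_needed(cpu_s_per_unit: float, units_per_day: float,
                    cores_per_replica: int, target_utilization: float) -> int:
    """Average-load sizing only (assumption): ignores peaks and failover headroom."""
    core_seconds_per_replica = 86_400 * cores_per_replica * target_utilization
    return math.ceil(cpu_s_per_unit * units_per_day / core_seconds_per_replica)


def fund_rewrite(result: SpikeResult, units_per_day: float, current_replicas: int,
                 cores_per_replica: int = 4, target_utilization: float = 0.6,
                 material_cut: float = 0.30) -> bool:
    """Fund the narrow port if CPU time drops materially or the next scale step is avoided."""
    if result.baseline_cpu_s_per_unit <= 0:
        raise ValueError("baseline must be a positive measurement")
    cut = 1 - result.candidate_cpu_s_per_unit / result.baseline_cpu_s_per_unit
    need_before = replicas_needed(result.baseline_cpu_s_per_unit, units_per_day,
                                  cores_per_replica, target_utilization)
    need_after = replicas_needed(result.candidate_cpu_s_per_unit, units_per_day,
                                 cores_per_replica, target_utilization)
    avoids_scale_step = need_before > current_replicas >= need_after
    return cut >= material_cut or avoids_scale_step
```

Keeping both conditions mirrors the paragraph above: either a material CPU cut or an avoided scale step is enough, and a spike that does neither is documented and dropped.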

Runtime choice deserves the same seriousness teams already give to database design, caching, and query plans. A service that burns CPU all day should not be allowed to hide behind hype about developer convenience once the evidence is visible. C# will not solve every workload and it will not outrun every systems language. It remains one of the clearest practical choices when a mainstream managed runtime has to do the same job with less waste than Python or Node.js.
